TikTok has announced that it’s cracking down on videos promoting abnormal and unhealthy eating after a long campaign by disordered eating support groups, politicians and regulators.

More than a billion people worldwide have downloaded TikTok, and the platform has long faced criticism for its laissez-faire approach to content regulation, especially given the youth of its userbase: TikTok is most popular among adolescent and pre-adolescent girls.

Among the most widely condemned content on TikTok are videos promoting unhealthy eating. While videos which outright promote eating disorders such as anorexia and bulimia have already been banned, others which more subtly encourage disordered eating practices have slipped through the net. These include the What I Eat In A Day trend, which campaigners believe has incentivised dangerously low-calorie diets and a culture of shame.

An official statement from TikTok reads: “We’re making this change, in consultation with eating disorders experts, researchers, and physicians, as we understand that people can struggle with unhealthy eating patterns and behaviour without having an eating disorder diagnosis. Our aim is to acknowledge more symptoms, such as over-exercise or short-term fasting, that are frequently under-recognised signs of a potential problem.”

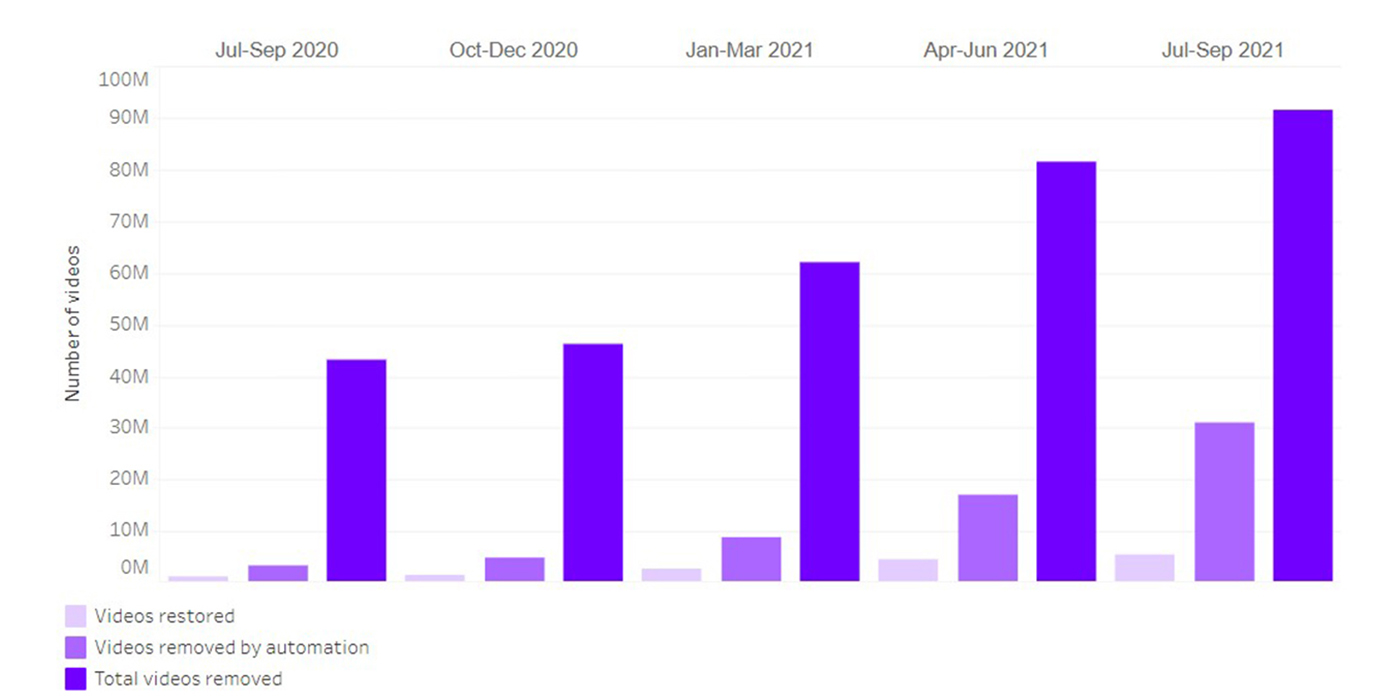

The statement emphasises that TikTok uses “a combination of technology and people to identify and remove violations of our Community Guidelines, and we will continue training our automated systems and safety teams to uphold our policies.”

An official transparency report published on TikTok shows the number of videos which have been removed from the site for contravening regulations. Just over 89 million videos were taken down in the last six months of 2020; this figure rose dramatically to almost 235 million over the nine months between January and September 2021, with over 91 million taken down between July and September alone. (These figures do not include the final quarter of 2021.)